To implement a TTS engine, an extension must declare the "ttsEngine" permission and then declare all voices it provides in the extension manifest. An extension could even do something different with the utterances, such as displaying closed captions in a pop-up window or sending them as log messages to a remote server. Extensions are free to use any available web technology to provide speech, including streaming audio from a server or HTML5 audio. Native Client and Flash were also listed as options, but both have since been deprecated or removed in Chrome.
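Here is a minimal TypeScript sketch of both steps, assuming a Manifest V3 extension. The manifest object declares the "ttsEngine" permission and one voice under the tts_engine key. The two handlers implement the closed-caption idea: they record each utterance instead of playing audio. The extension name, the voice name "Caption Voice", the background.js file name and the in-memory captions array are hypothetical. Check the field names against the current chrome.ttsEngine documentation before relying on them.

```typescript
// Sketch only: a "caption" TTS engine that records text instead of speaking it.

// One entry under the manifest's "tts_engine.voices" list.
interface EngineVoice {
  voice_name: string;
  lang?: string;
  event_types: string[];
}

// Hypothetical extension and voice names; the structure mirrors Chrome's ttsEngine manifest format.
const manifest = {
  name: "Caption TTS Engine",
  version: "1.0",
  manifest_version: 3,
  permissions: ["ttsEngine"],
  background: { service_worker: "background.js" },
  tts_engine: {
    voices: [
      { voice_name: "Caption Voice", lang: "en-US", event_types: ["start", "end"] },
    ] as EngineVoice[],
  },
};

// Text you would save as manifest.json.
const manifestJson: string = JSON.stringify(manifest, null, 2);

// Subset of the event object an engine reports back through sendTtsEvent.
interface TtsEvent {
  type: "start" | "end" | "interrupted";
  charIndex?: number;
}
type SendTtsEvent = (event: TtsEvent) => void;

// Hypothetical caption sink; a real extension might forward these to a pop-up or a server.
const captions: string[] = [];

// onSpeak handler: "speaks" by storing a caption and reporting start/end events.
function onSpeak(utterance: string, _options: unknown, sendTtsEvent: SendTtsEvent): void {
  sendTtsEvent({ type: "start", charIndex: 0 });
  captions.push(utterance);
  sendTtsEvent({ type: "end", charIndex: utterance.length });
}

// onStop handler: nothing is playing, so there is nothing to cancel.
function onStop(): void {}

// Register only when running inside an extension that exposes chrome.ttsEngine.
declare const chrome: any;
if (typeof chrome !== "undefined" && chrome.ttsEngine) {
  chrome.ttsEngine.onSpeak.addListener(onSpeak);
  chrome.ttsEngine.onStop.addListener(onStop);
}
```

Inside the extension's service worker, the two listeners receive the speak and stop requests that Chrome routes to this engine's voice. Keeping the handlers as plain functions also lets you exercise them outside the browser.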

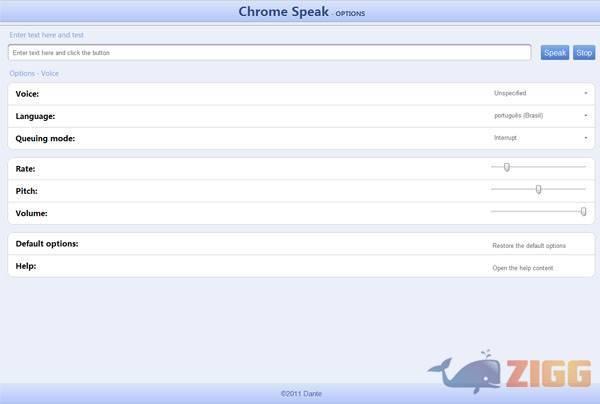

An extension can register itself as a speech engine. By doing so, it can intercept some or all calls to functions such as tts.speak and tts.stop and provide an alternate implementation.

You can hear full pages read aloud with Chrome's built-in screen reader, or hear parts of a page, including specific words, read aloud with Select-to-speak.

Step 1: Open a new Google Docs file. Open Google Chrome on your device and head to the Google Docs website. If you're not currently logged into your Google account, go ahead and log in now.

To edit a speed dial, open the New Tab page and hover the mouse cursor over one of the speed dial websites. A button appears at the top right corner; unlike before, it no longer deletes the tile. Click it, and a small window opens that lets you edit the URL of the website (i.e., the speed dial). You can also edit the name that appears under the speed dial.

Remove temp files and unwanted add-ons/extensions in Chrome and Edge. Click the Start menu, search for Disk Cleanup, and run it as administrator. Once you see the options to remove, choose OK to continue.

The new JavaScript Web Speech API makes it easy to add speech recognition to your web pages. It allows fine control and flexibility over the speech recognition capabilities in Chrome version 25 and later, and recognized text can appear almost immediately while you are still speaking.

Specified as part of an interface called SpeechSynthesisGetter and implemented by the Window object, the speechSynthesis property provides access to the SpeechSynthesis controller. It is therefore the entry point to speech synthesis functionality. SpeechSynthesisVoice represents a voice that the system supports. Every SpeechSynthesisVoice has its own associated speech service, including information about its language, name, and URI. SpeechSynthesisUtterance represents a speech request. It contains the content the speech service should read and information about how to read it (e.g., language, pitch, and volume). SpeechSynthesisEvent contains information about the current state of SpeechSynthesisUtterance objects that have been processed in the speech service.
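
The near-immediate text comes from interim results. The sketch below assumes the unprefixed SpeechRecognition constructor or, failing that, Chrome's prefixed webkitSpeechRecognition. It turns on interimResults and splits each result event into committed (final) and tentative (interim) text. The helper names collectTranscripts and startDictation are hypothetical, and so are the minimal structural types that stand in for the browser's SpeechRecognitionEvent.

```typescript
// Minimal structural types mirroring the parts of SpeechRecognitionEvent read here.
interface Alternative { transcript: string; }
interface RecognitionResult { isFinal: boolean; [index: number]: Alternative; }
interface RecognitionEventLike { resultIndex: number; results: ArrayLike<RecognitionResult>; }

// Appends newly finalized text to what was already committed and gathers the still-tentative text.
function collectTranscripts(
  event: RecognitionEventLike,
  committedSoFar: string,
): { finalText: string; interimText: string } {
  let finalText = committedSoFar;
  let interimText = "";
  // Only results from resultIndex onward changed in this event.
  for (let i = event.resultIndex; i < event.results.length; i++) {
    const result = event.results[i];
    // result[0] is the top-ranked alternative.
    if (result.isFinal) finalText += result[0].transcript;
    else interimText += result[0].transcript;
  }
  return { finalText, interimText };
}

// Starts continuous dictation; returns false where the API is unavailable.
function startDictation(onUpdate: (finalText: string, interimText: string) => void): boolean {
  const g = globalThis as any;
  const Recognition = g.SpeechRecognition || g.webkitSpeechRecognition;
  if (!Recognition) return false; // speech recognition not available here
  const recognition = new Recognition();
  recognition.continuous = true;     // keep listening across pauses
  recognition.interimResults = true; // report tentative text while the user is still speaking
  let committed = "";
  recognition.onresult = (event: RecognitionEventLike) => {
    const { finalText, interimText } = collectTranscripts(event, committed);
    committed = finalText;
    onUpdate(finalText, interimText);
  };
  recognition.start();
  return true;
}
```

Because collectTranscripts never touches browser objects, it can be tested outside Chrome. In a page, you would pass startDictation a callback that redraws the final and interim text, for example in a text area.
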
SpeechSynthesisErrorEvent contains information about any errors that occur while processing SpeechSynthesisUtterance objects in the speech service. SpeechSynthesis is the controller interface for the speech service. It can be used to retrieve information about the synthesis voices available on the device, to start and pause speech, and to issue other commands.

Speech synthesis is accessed via the SpeechSynthesis interface, a text-to-speech component that allows programs to read out their text content (normally via the device's default speech synthesizer). Different voice types are represented by SpeechSynthesisVoice objects, and different parts of text that you want to be spoken are represented by SpeechSynthesisUtterance objects. You can have an utterance spoken by passing it to the SpeechSynthesis.speak() method. For more details on using these features, see Using the Web Speech API.
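A sketch of the synthesis flow in TypeScript has three parts. First, it picks a SpeechSynthesisVoice for a language. Second, it keeps rate, pitch and volume inside the ranges the specification allows (rate 0.1 to 10, pitch 0 to 2, volume 0 to 1). Third, it passes the utterance to speechSynthesis.speak(). The helper names pickVoice, normalizeOptions and speak are hypothetical. The fallback order for choosing a voice (exact language tag, then primary language, then the default voice) is one reasonable choice, not something the API prescribes.

```typescript
// Structural subset of SpeechSynthesisVoice used here.
interface VoiceLike { name: string; lang: string; default: boolean; }

// Exact tag match first, then same primary language, then the platform default voice.
function pickVoice<V extends VoiceLike>(voices: V[], lang: string): V | undefined {
  // Normalise case and "_" separators so "en_GB" and "en-gb" compare equal.
  const norm = (tag: string) => tag.replace("_", "-").toLowerCase();
  const wanted = norm(lang);
  const primary = wanted.split("-")[0];
  return (
    voices.find((v) => norm(v.lang) === wanted) ??
    voices.find((v) => norm(v.lang).split("-")[0] === primary) ??
    voices.find((v) => v.default)
  );
}

interface UtteranceOptions { rate?: number; pitch?: number; volume?: number; }

// Fills in defaults (1 for all three) and clamps each value into its allowed range.
function normalizeOptions(o: UtteranceOptions): Required<UtteranceOptions> {
  const clamp = (x: number, lo: number, hi: number) => Math.min(hi, Math.max(lo, x));
  return {
    rate: clamp(o.rate ?? 1, 0.1, 10),
    pitch: clamp(o.pitch ?? 1, 0, 2),
    volume: clamp(o.volume ?? 1, 0, 1),
  };
}

// Speaks text if the environment supports speech synthesis; returns false otherwise.
function speak(text: string, lang: string, options: UtteranceOptions = {}): boolean {
  const g = globalThis as any;
  if (!g.speechSynthesis || !g.SpeechSynthesisUtterance) return false;
  const utterance = new g.SpeechSynthesisUtterance(text);
  Object.assign(utterance, normalizeOptions(options)); // sets rate, pitch and volume
  utterance.lang = lang;
  const voice = pickVoice(g.speechSynthesis.getVoices(), lang);
  if (voice) utterance.voice = voice;
  // SpeechSynthesisEvent and SpeechSynthesisErrorEvent handlers.
  utterance.onend = () => console.log("finished speaking");
  utterance.onerror = (e: { error: string }) => console.warn("speech error:", e.error);
  g.speechSynthesis.speak(utterance);
  return true;
}
```

Chrome may load its voice list asynchronously, so getVoices() can return an empty array at first. A page that needs a specific voice can wait for the voiceschanged event before calling speak.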

The Web Speech API makes web apps able to handle voice data. Speech recognition is accessed via the SpeechRecognition interface, which provides the ability to recognize voice context from an audio input (normally via the device's default speech recognition service) and respond appropriately. Generally you'll use the interface's constructor to create a new SpeechRecognition object, which has a number of event handlers available for detecting when speech is input through the device's microphone. The SpeechGrammar interface represents a container for a particular set of grammar that your app should recognize. Grammar is defined using JSpeech Grammar Format (JSGF).

Step 1: Check browser support. Chrome is the major browser that supports the speech-to-text API, using Google's speech recognition engines.

Make sure that Chrome Management is activated. Repeat steps 3 and 4 for each organisational unit in which you wish to install Oribi Speak.
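A sketch of the recognition setup covers two pieces, assuming the MDN-style interfaces. getRecognitionCtor performs the browser-support check, falling back to the webkitSpeechRecognition name Chrome has used. buildJsgf produces a one-rule JSGF grammar, which is added to a SpeechGrammarList with addFromString. The function names, the "colors" grammar and its rule name are hypothetical. The words are assumed to be plain tokens that need no JSGF escaping.

```typescript
// Constructor type for SpeechRecognition / SpeechGrammarList (kept loose on purpose).
type Ctor = new () => any;

interface SpeechScope {
  SpeechRecognition?: Ctor;
  webkitSpeechRecognition?: Ctor;
  SpeechGrammarList?: Ctor;
  webkitSpeechGrammarList?: Ctor;
}

// Browser-support check: prefer the standard name, fall back to Chrome's prefixed one.
function getRecognitionCtor(scope: SpeechScope = globalThis as any): Ctor | undefined {
  return scope.SpeechRecognition ?? scope.webkitSpeechRecognition;
}

// Builds a one-rule grammar such as:
//   #JSGF V1.0; grammar colors; public <color> = red | green ;
function buildJsgf(grammarName: string, ruleName: string, words: string[]): string {
  if (words.length === 0) throw new Error("a grammar needs at least one word");
  return `#JSGF V1.0; grammar ${grammarName}; public <${ruleName}> = ${words.join(" | ")} ;`;
}

// Wires the grammar into a recognizer; returns undefined where the API is missing.
function createColorRecognizer(colors: string[], scope: SpeechScope = globalThis as any): any {
  const Recognition = getRecognitionCtor(scope);
  const GrammarList = scope.SpeechGrammarList ?? scope.webkitSpeechGrammarList;
  if (!Recognition || !GrammarList) return undefined;
  const recognition = new Recognition();
  const grammars = new GrammarList();
  grammars.addFromString(buildJsgf("colors", "color", colors), 1); // weight 1 is the maximum
  recognition.grammars = grammars;
  recognition.lang = "en-US";
  recognition.interimResults = false; // only deliver final results
  return recognition;
}
```

Passing the scope explicitly lets you test the wiring with stand-in constructors. In a browser, the default globalThis is used.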
